Cylance, the next big thing in VDI endpoint protection?

Anti-virus and threat analysis are hot topics these days. This is because of the increasing need for more powerful and better-performing IT security solutions. Naturally, the release of the new endpoint security software CylancePROTECT got our attention. This solution has some very interesting features, and its agent is supposed to consume less than 1% CPU. This all sounds very promising, but is it true what they say? We have examined it for you…

A comparison of Cylance with traditional anti-virus

Initially, we had no test environment available in our lab. Therefore, we created a Login VSI test environment on our Nutanix-based development lab in order to compare CylancePROTECT with traditional anti-virus. The difference between Cylance and traditional anti-virus is that Cylance uses artificial intelligence and machine learning to stop malware and other threats instead of the traditional pattern-based scanning, which is more CPU intensive.

We used the following setup on our 4-node Nutanix environment (total of 223.9 GHz, 1 TB DDR3).

All tests were done using Login VSI 4.1.7.13 on a pool of 20 machines with a direct desktop connection:

- The knowledge worker workload

- 2 vCPU per desktop VM

- Windows 10 x64 has been used

- Updates were installed on the VMs

- Office Pro 2013 x64

- Internet Explorer 11

- Local Profile

- Cylance agent 1.2.1380 x64

- Cylance was run in the most restrictive setting, with all features enabled

All results are expressed as a VSIbaseline average response time score in milliseconds, which is based on the VSImax; a lower score means a more responsive desktop. VSImax is the ‘Virtual Session Index (VSI)’. With Virtual Desktop Infrastructure (VDI) and Terminal Services (RDS) workloads, this is valid and useful information. This index simplifies comparisons and makes it possible to understand the true impact of configuration changes at the hypervisor host or guest level (Login VSI website).

Without any anti-virus installed on the VMs, we achieved a baseline VSIbaseline average response time score of 714.

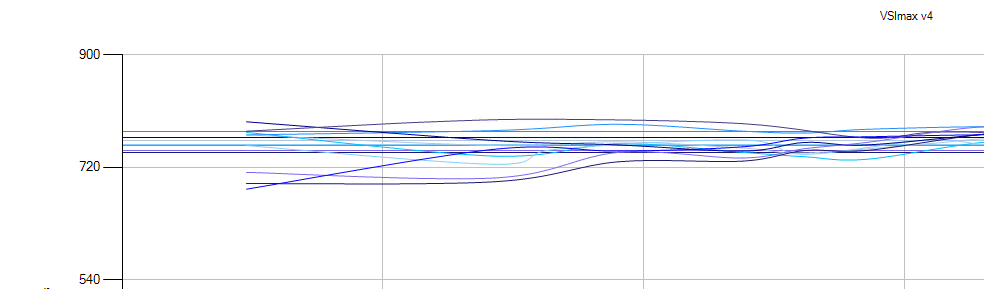

Afterwards, we installed CylancePROTECT and ran more than 10 tests. This was the average score:

The tests showed a very promising VSIbaseline average response time score of 760, which deviates from the baseline by only 6%. This indicates that Cylance has very little impact on the system.

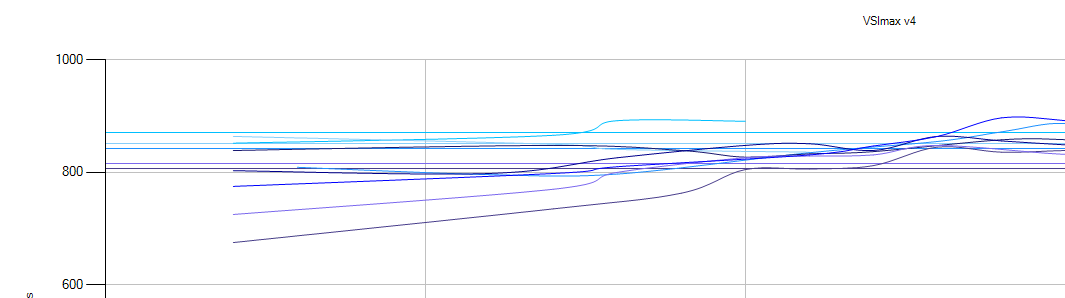

The traditional AV was next; we did the same tests as before and obtained the following results:

The overall average that emerged from the tests was 850. This is an increase of 19% compared to the baseline. We noted that the results were more variable. This is also visible on the chart, where the lines (each the average of one test) are further apart. The cause of this fluctuation is the constant background scanning, which in turn decreased the overall performance.
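The percentages quoted for both products follow directly from the three average scores. As a minimal sketch of that arithmetic (the function and variable names are illustrative and not part of Login VSI), the relative increase over the baseline can be computed like this:

```python
def relative_increase(baseline: float, measured: float) -> float:
    """Return how much `measured` exceeds `baseline`, as a percentage."""
    return (measured - baseline) / baseline * 100.0


# Average VSIbaseline response time scores (ms) reported in this article.
BASELINE = 714        # no anti-virus installed
CYLANCE = 760         # CylancePROTECT
TRADITIONAL_AV = 850  # traditional anti-virus

cylance_pct = relative_increase(BASELINE, CYLANCE)
av_pct = relative_increase(BASELINE, TRADITIONAL_AV)
# Difference between the two overheads, in percentage points.
gap_points = av_pct - cylance_pct

print(f"CylancePROTECT: +{cylance_pct:.1f}%")              # +6.4%
print(f"Traditional AV: +{av_pct:.1f}%")                   # +19.0%
print(f"Gap:            {gap_points:.1f} percentage points")  # 12.6
```

Rounded to whole numbers, this gives the 6% and 19% increases, and a gap of roughly 13 percentage points between the two.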

Conclusion

Based on this research, we expect a positive future for Cylance. Its results differ by only 6% from the baseline. That is a big difference compared to the 19% of the traditional AV. The difference can mainly be attributed to the fact that the traditional AV immediately launched a preemptive scan of the filesystem. Cylance, on the other hand, relies on its artificial intelligence and machine learning features, which make a full system scan less essential and therefore have less performance impact on the system.

Unfortunately, we were unable to extract the average CPU percentage from the test, but the difference of 13 percentage points in the VSIbaseline average response time (ms) score shows us that Cylance indeed has a much lower profile (fewer resources used) on the VM than the traditional AV. This is what VDI is all about: increasing the number of VDI sessions on your existing systems while still maintaining the same user experience with great performance.

We would like to note that the tests using Login VSI could be optimized. A larger desktop test pool might have given us a very different result. However, due to the limitations of the trial license provided by Login VSI, we were restricted to a pool of 20 machines.

These results were derived from a demo environment and could be different in another test setup. We advise everyone who uses these data to carry out their own performance tests.